We’ve been using VEBA in our environment for quite some time now and it’s been working great! Since we don’t have a Kubernetes stack, we run the prebuilt OVA. This has also been going fine for the most part.

A few months ago we started noticing that the functions would just stop running. After a few weeks of troubleshooting off and on, I stumbled upon a message by William Lam in the VMware {Code} Slack channel. He stated that you could work around those kinds of issues by simply redeploying the event router in VEBA.

To do this you could SSH to the appliance with the root account and run the following commands:

|

|

This seemed to work for the most part. However, we started noticing soon after that sometimes we could see the events in the event router logs - but the functions would not run. When we redeployed the functions, everything seemed to work again as before. Over time, and as our environment got bigger, we did notice that we had to perform these actions more and more frequently.

Relying on us to notice the problem and then redeploy manually was not going to cut it.

Cron jobs to the rescue!

When I talked to William at VMware Explore in Barcelona, he indicated that there are much larger environments than ours running the prebuilt appliance without issues, but that the problem we were seeing is not unheard of.

In the end, I built a variation of the commands listed above and put it in a daily cron job. This way, the event router and the functions get redeployed every day, which should allow us to keep enjoying the benefits of VEBA without too much hassle!

To get started, first you need to install cronie on the appliance. You can do this by simply running:

tdnf install -y cronie

Now that we have the package installed, we still need to enable the service so it starts at boot, and start it right away:

systemctl enable --now crond

To verify that it is actually running, you can always run

systemctl status crond

We want the functions and the event router to be redeployed daily, so we’re going to create a new file and put it in the /etc/cron.daily directory. The scripts in this directory are run once a day. You can check the command that actually triggers the run by looking at the /etc/cron.d/dailyjobs file.
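One thing that is easy to miss: run-parts only executes files that have the executable bit set, so a script dropped into /etc/cron.daily without it is silently skipped. Here is a small sketch that shows this in a throwaway directory, so nothing on the appliance is touched. The file name veba-redeploy and the script body are placeholders, not the actual job.

```shell
#!/bin/bash
# Demo in a temporary directory; nothing under /etc is modified.
demo_dir="$(mktemp -d)"
job="$demo_dir/veba-redeploy"   # hypothetical file name

# Stand-in body; the real job would contain the redeploy commands.
printf '#!/bin/bash\necho "redeploy placeholder ran"\n' > "$job"

# A freshly written file is not executable, so run-parts would skip it.
[ -x "$job" ] || echo "not executable yet: $job"

# Set the executable bit so the file qualifies as a cron.daily job.
chmod 755 "$job"
[ -x "$job" ] && echo "executable: $job"

# If run-parts is available, it now picks the file up and runs it.
if command -v run-parts >/dev/null 2>&1; then
    run-parts "$demo_dir" || echo "run-parts reported an error"
fi
```

On the appliance itself, the equivalent step would be a chmod 755 on whatever file you created in /etc/cron.daily.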

The content of our daily cron job is

|

|
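Because this job runs unattended, it can help to record what each run did. Below is a minimal sketch of a logging helper that a script like this could include. The log path and the function names log and run_step are my own assumptions for illustration; they are not part of the job shown above.

```shell
#!/bin/bash
# Hypothetical logging helper for a cron.daily script.
# LOG_FILE is an assumed location; override it if you prefer another path.
LOG_FILE="${LOG_FILE:-/var/log/veba-redeploy.log}"

# log MESSAGE: append a timestamped line to the log file.
log() {
    printf '%s %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*" >> "$LOG_FILE"
}

# run_step LABEL COMMAND [ARGS...]: run a command, capture its output in the
# log, and record whether it succeeded. Returns the command's exit status.
run_step() {
    local label="$1"
    shift
    log "start: $label"
    if "$@" >> "$LOG_FILE" 2>&1; then
        log "ok: $label"
    else
        local rc=$?
        log "FAILED ($rc): $label"
        return "$rc"
    fi
}
```

Each redeploy command would then be wrapped as run_step "some label" followed by the command, so a quick look at the log file tells you whether last night's run went through.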

Testing if it works

We could just wait one day and see if the cron job actually ran, but who has the patience for that? If you look at the /etc/cron.d/dailyjobs file, you will notice it specifies the command being used to run the files in the directory.

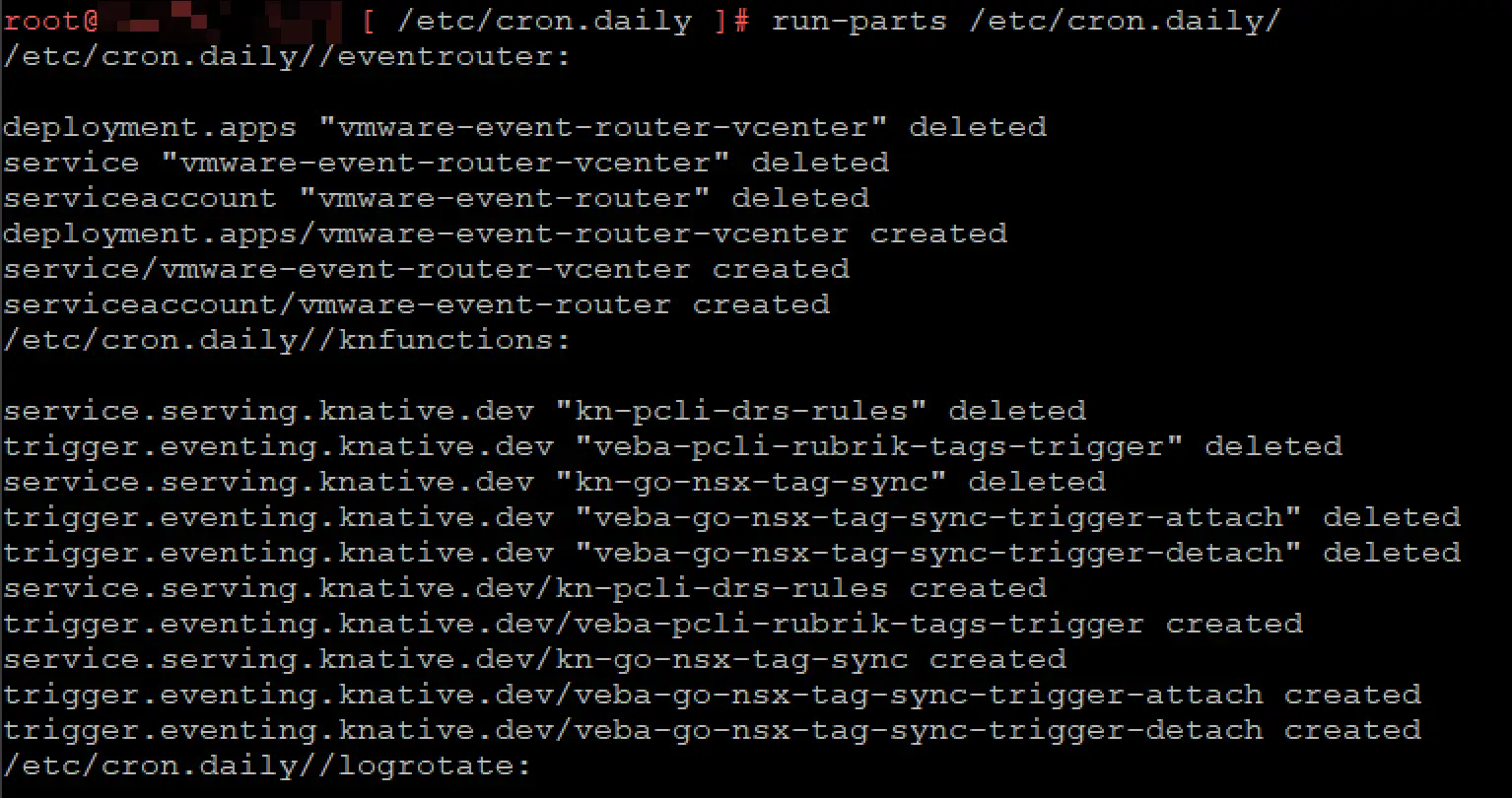

So after manually running this command

run-parts /etc/cron.daily

We got the following output

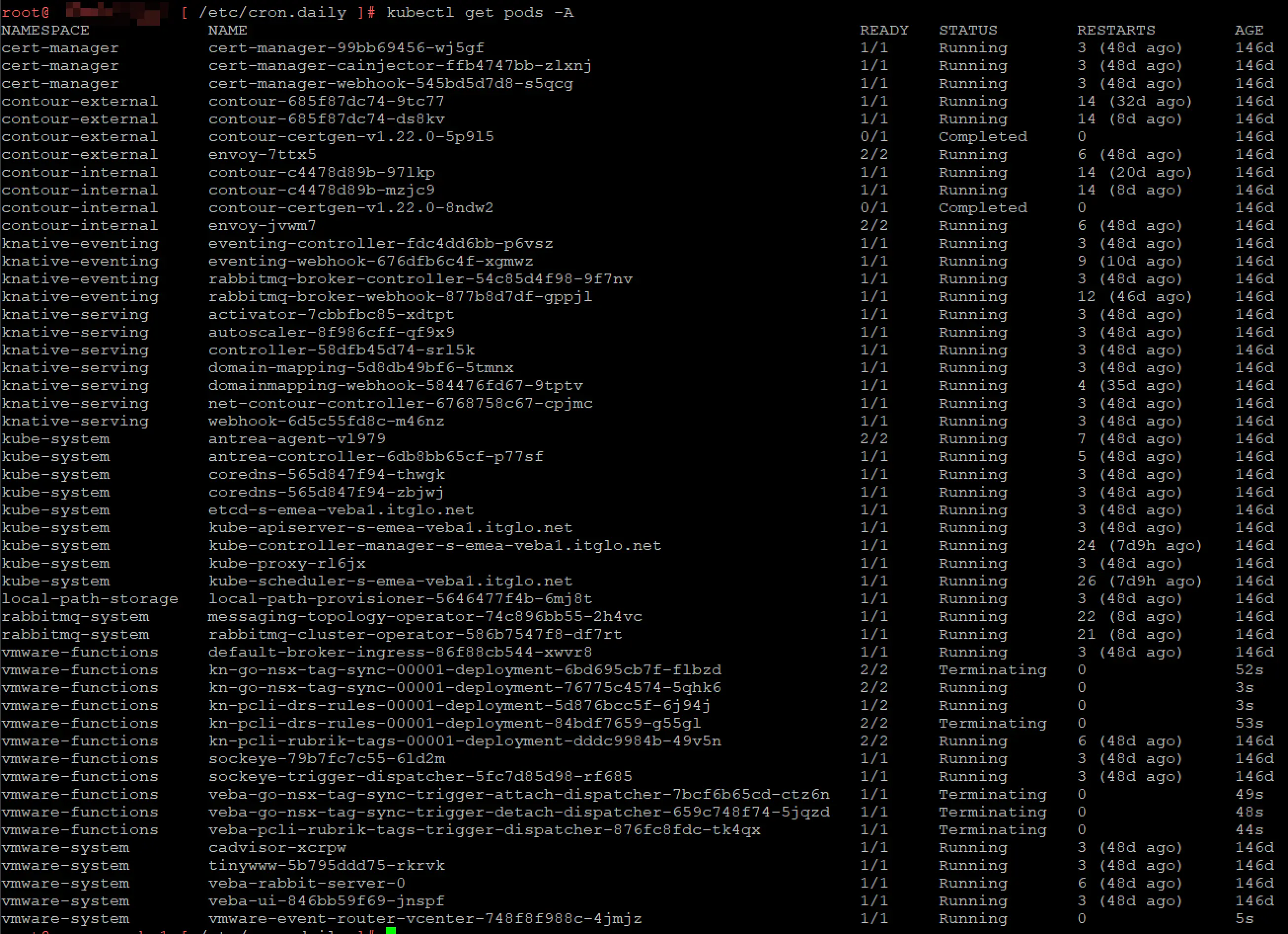

So everything seems to be running okay. Let’s verify that the pods have actually been recreated. You can do this by running

kubectl get pods -A
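What you are looking for is the AGE column: pods that were just recreated show an age of seconds or minutes. If you want to check this without eyeballing the list, here is a rough sketch of a filter that reads the pod listing on stdin and prints pods that look older than a day. The function name stale_pods is made up, it assumes AGE is the last column and uses the usual kubectl age format (for example 45s, 3h12m, 30h, 2d4h), and the namespaces you pass in are up to you.

```shell
#!/bin/bash
# stale_pods NAMESPACE...: read `kubectl get pods -A` output on stdin and
# print pods in the given namespaces whose AGE is more than roughly a day.
# Assumption: AGE is the last column of each row.
stale_pods() {
    local namespaces="$*"
    awk -v wanted="$namespaces" '
        BEGIN {
            n = split(wanted, list, " ")
            for (i = 1; i <= n; i++) ns[list[i]] = 1
        }
        $1 == "NAMESPACE" { next }      # skip the header row
        !($1 in ns)       { next }      # ignore namespaces we did not ask for
        {
            age = $NF
            stale = 0
            if (age ~ /[dy]/) {
                stale = 1               # ages expressed in days or years
            } else if (age ~ /^[0-9]+h/) {
                hours = age
                sub(/h.*/, "", hours)   # keep only the leading hour count
                if (hours + 0 > 24) stale = 1
            }
            if (stale) print $1 "/" $2 " (" age ")"
        }'
}
```

You would use it as kubectl get pods -A | stale_pods followed by the namespaces your event router and functions run in. In our appliance I would expect those to be vmware-system and vmware-functions, but treat that as an assumption and check your own output. No output means everything in those namespaces was recreated within the last day.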

Et voilà, everything was redeployed as expected!

I hope this post can help you. If you do still have issues with VEBA, make sure you also sign up for the VMware {Code} Slack and check out the VEBA channel there!